Video games in good company since 1958

As with all things in life, video games are best when shared with others. But despite the medium’s rich history and current resurgence of multiplayer games, a tired stigma remains:

Video games are played in isolation, and thus breed social outcasts.

“There is still this mindset that video games are lone wolf activities for like-minded groups of nerds,” says Troy Goodfellow, a freelance critic for nearly a decade. “But on the contrary, they build connections better than a lot of people think.”

And he’s right. Video games actually started as multiplayer activities. In 1958, American physicist William Higinbotham created Tennis for Two, widely regarded as the first video game. As its name implies, the simulation game was designed to entertain more than one player; it was intended to be social.

Fourteen years later, in 1972, Atari released the first commercially successful video game, Pong. It, like Tennis for Two, was innately multiplayer. That same year, Magnavox released the first home video game console, the Odyssey, which shipped with two controller ports. Since then, virtually every home console has supported two to four controllers, if not more through the use of adapters.

What’s more, party games, cooperative play, media coverage, and online multiplayer are more pervasive today than ever before.

That’s the factual history. Here’s the science.

In 2004, MIT professor Henry Jenkins found that nearly 60 percent of ardent gamers play with others. According to a December 2007 report from The NPD Group, which tracks U.S. video game sales, more than half of both dedicated and casual players enjoy playing games with family and friends, while 48 percent believe gaming can enhance relationships and bring families closer together.

In 2006, a joint study by the University of Wisconsin and the University of Illinois found that online multiplayer games can actually increase sociability, much as Facebook and MySpace are used to network with others over the internet. In June, a researcher at Victoria University made a strong case that gamers are not the lonely losers that many assume them to be.

So how did video games earn a reputation for being exclusively hermitic?

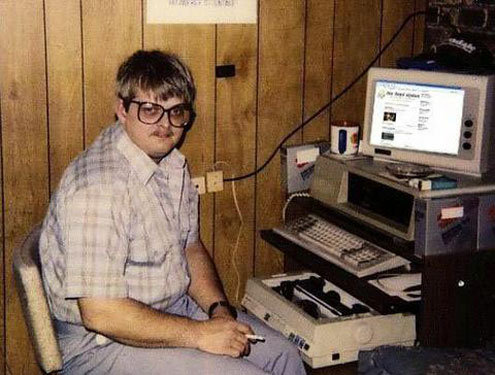

Photo of a typical video game player circa 2008.

First, single-player games are still the preferred choice of conventional players, who dominate the voice of video games despite being outnumbered by casual players. The same NPD report cited above found that 62 percent of core gamers prefer playing games alone, while 46 percent of casual gamers (easily the largest and fastest-growing group) fancy the same.

Second, video games are still widely considered an activity for kids, despite the average gamer being 33 years old, according to the Entertainment Software Association. As a result, the inability of our youth to self-moderate their playing times regrettably gives the rest of us a bad rap (though many adult gamers have a hard time putting down controllers too).

And third, hobbit gamers do exist, giving a false impression to outsiders that video games (and not a lack of social graces) are to blame for such extreme and eccentric behavior.

To this day, the reality is that video games have been and continue to be a predominantly multiplayer activity when considering all players and genres. Video games are more social than movies. They are bigger than the music industry. And they are a lot more human and mature than baby boomers would like to admit. It just may take some time, or at least the dawning of a new generation, before video games are seen as such.

“In five or six years, the gaming population is going to look very different than it does right now,” maintains Goodfellow. “Today’s graduating classes have never known a life without gaming as a mainstream, acceptable activity. That will affect business, media coverage, design, everything — and more radically than people think.”